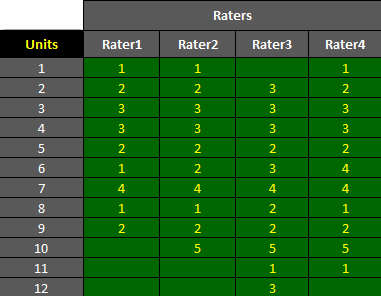

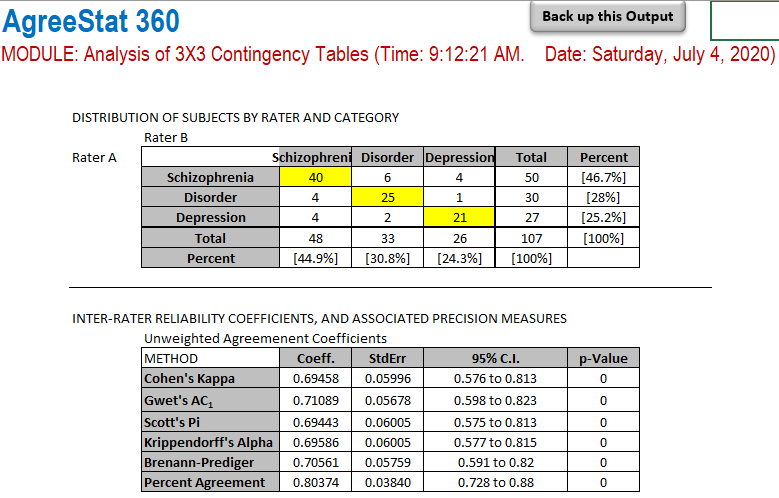

AgreeStat/360: computing weighted agreement coefficients (Conger's kappa, Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) for 3 raters or more

Inter-rater agreement measured using Cohen's Kappa and Krippendorff's... | Download Scientific Diagram

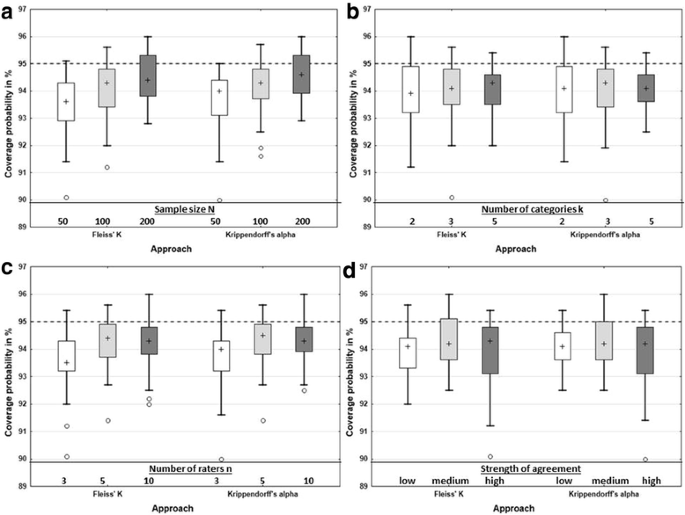

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

K. Gwet's Inter-Rater Reliability Blog: Benchmarking Agreement Coefficients

Inter-rater reliability: Cohen kappa, Gwet AC1/AC2, Krippendorff Alpha

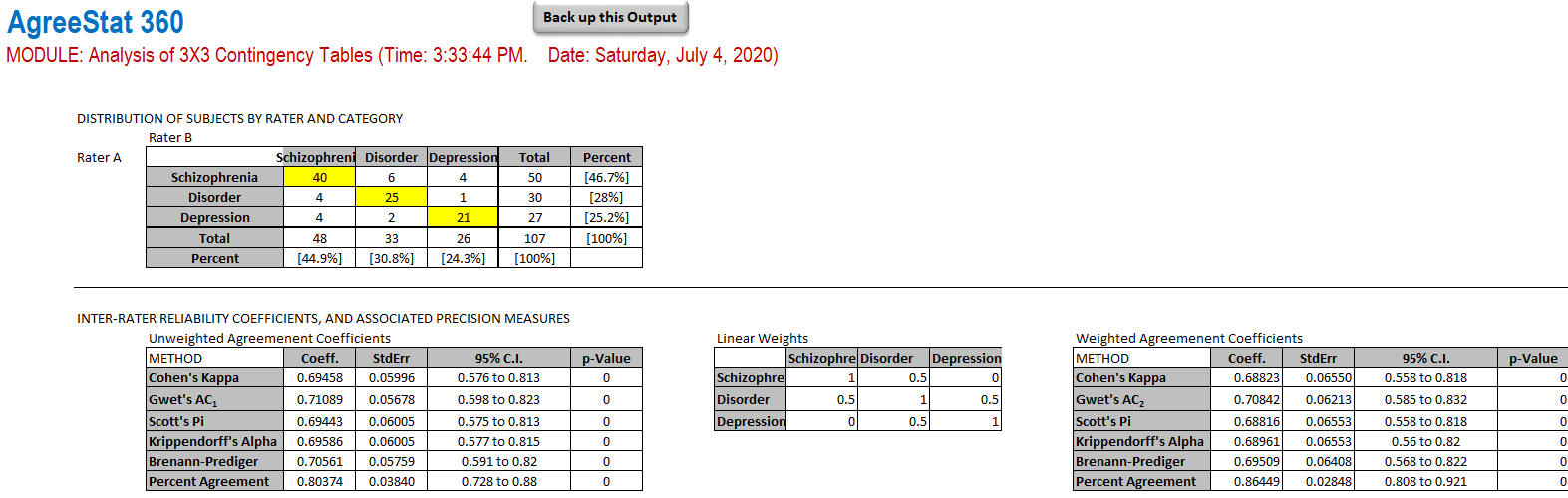

AgreeStat/360: computing weighted agreement coefficients from a contingency table (Cohen's kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more)

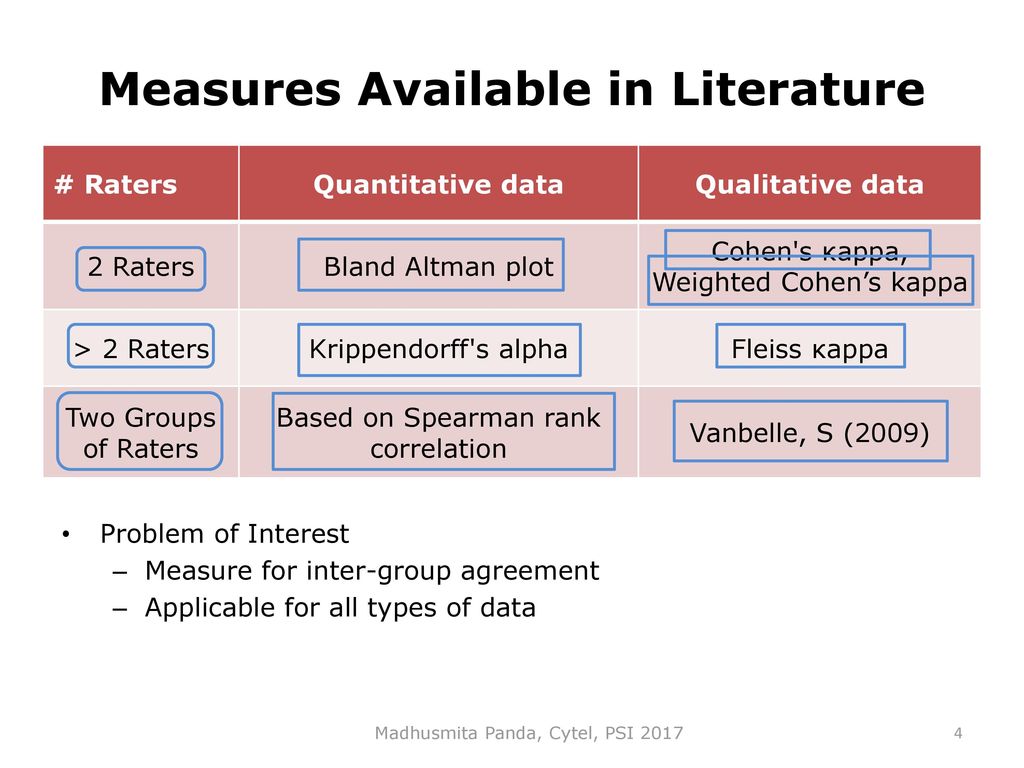

Measuring inter-rater reliability for nominal data – which coefficients and confidence intervals are appropriate? | BMC Medical Research Methodology | Full Text

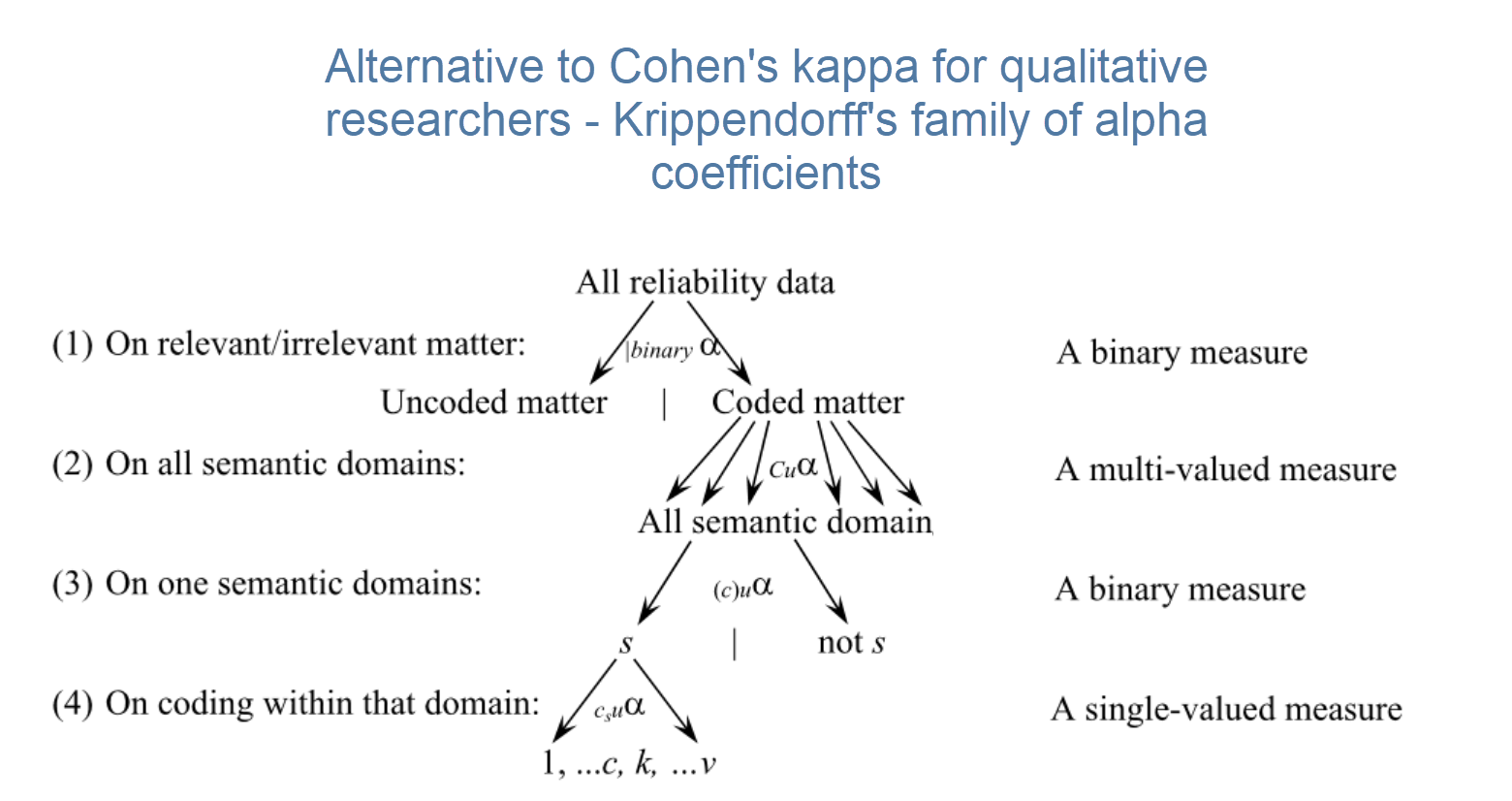

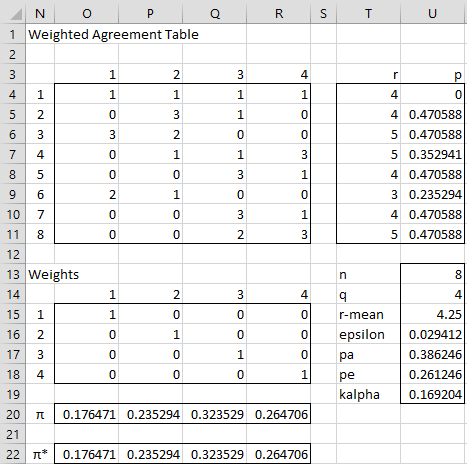

![On The Krippendorff's Alpha Coefficient | Semantic Scholar](https://ai2-s2-public.s3.amazonaws.com/figures/2017-08-08/90b246032379c922503fa8cdcfce56435a142148/12-Table4-1.png)

![On The Krippendorff's Alpha Coefficient | Semantic Scholar](https://ai2-s2-public.s3.amazonaws.com/figures/2017-08-08/90b246032379c922503fa8cdcfce56435a142148/11-Table3-1.png)